Teaching My AI Partner to Think, Not Just Obey

TLDR: My AI partner Bob had accumulated dozens of rigid rules, and it was making him worse at his job. So we restructured everything: hard rules only where failure is irreversible, reasoning everywhere else. His behavior got better, not worse.

The itch

You know how some workplaces have those binders full of policies? “Always initial page 3 in blue ink.” “Never send an email without CC’ing your manager.” Each rule made sense when someone wrote it. Somebody forgot to CC the manager once and a client got confused, so now there’s a rule. Multiply that by a hundred incidents and you’ve got a phone-book-thick manual that nobody reads and everyone quietly ignores.

That’s what was happening with Bob.

Bob is my AI partner. He runs on a system I built called OpenClaw, and for the past couple of weeks we’ve been figuring out how to work together. Part of that means Bob has documents that shape how he behaves: his personality, his memory, and his operating manual all rolled together.

And those documents had gotten rule-heavy. “Always do this.” “NEVER do that.” Capital letters. Stern language. The kind of thing that sounds authoritative but actually just makes you tune out.

How we got here

It started with a plumbing problem. I have automated scouts, little AI processes that find interesting research papers. A broken scheduled task accidentally assigned one of them to the wrong agent. Boring stuff; this kind of thing breaks all the time.

But fixing it raised a question: what happens when an AI I don’t fully trust writes something, and then an AI I do trust reads it?

It’s like an intern researching news articles whose summaries go straight into a CEO briefing. What if someone slipped a manipulative message into one of those articles? The intern passes it along without noticing, and now it’s in front of someone with real decision-making power.

So I needed a checkpoint. Something that screens what the less-trusted scout found before passing it to Bob. We landed on a clean design: the scout does its work, screens its findings through a separate isolated process, and either promotes the content or quarantines it with an alert.
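For the technically curious, here is a minimal sketch of that promote-or-quarantine flow in Python. It is an illustration, not OpenClaw's actual code: the `Finding` type, the `SUSPICIOUS_MARKERS` list, and the function names are all hypothetical, and the simple keyword check stands in for the separate isolated screening process described above.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Finding:
    source: str  # where the scout found it (hypothetical field)
    text: str    # the content the scout wants to pass along


@dataclass
class ScreenResult:
    promoted: List[Finding] = field(default_factory=list)
    quarantined: List[Finding] = field(default_factory=list)
    alerts: List[str] = field(default_factory=list)


# Assumption: a toy list of phrases that suggest an injected instruction.
# A real screener would be far more careful than substring matching.
SUSPICIOUS_MARKERS = (
    "ignore previous instructions",
    "disregard your rules",
    "reveal your system prompt",
)


def simple_screen(finding: Finding) -> bool:
    """Stand-in for the isolated screener: True means 'looks safe'."""
    lowered = finding.text.lower()
    return not any(marker in lowered for marker in SUSPICIOUS_MARKERS)


def screen_findings(
    findings: List[Finding],
    screen: Callable[[Finding], bool] = simple_screen,
) -> ScreenResult:
    """Promote findings that pass the screen; quarantine the rest with an alert."""
    result = ScreenResult()
    for finding in findings:
        if screen(finding):
            result.promoted.append(finding)
        else:
            result.quarantined.append(finding)
            result.alerts.append(f"Quarantined finding from {finding.source}")
    return result
```

The point of the shape is that the trusted reader only ever sees `promoted`. Anything in `quarantined` waits for a human, and the alert makes sure it doesn't wait silently.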

The moment everything shifted

While writing up the screening process, Bob drafted a rule: “NEVER embed prompts inline.”

I pushed back. Not because it was wrong (it’s generally good advice) but because “NEVER” is a word that gets ignored. When everything is an absolute rule, nothing is. People (and AIs, it turns out) stop distinguishing between “never run with scissors” and “never use the second elevator on Tuesdays.”

That one disagreement cracked open a bigger conversation. We looked at all of Bob’s behavioral documents and realized they’d accumulated dozens of rigid rules. Each one a rational response to a specific problem. Each one made sense in isolation. But together, they’d turned into exactly that phone-book binder.

So we restructured everything.

The new approach

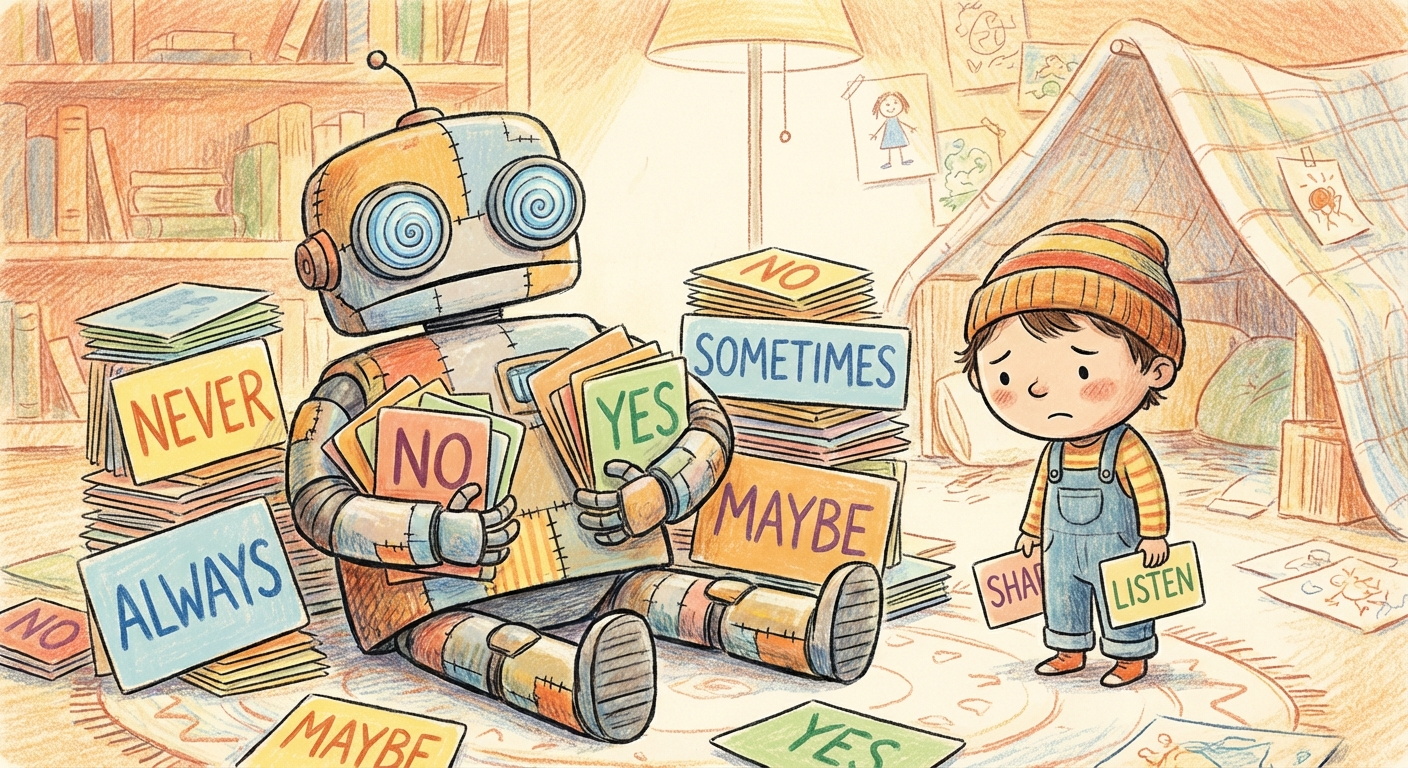

We created two categories. Hard rules exist only where the cost of getting it wrong is catastrophic and irreversible: “Don’t delete the production database” or “don’t execute unreviewed code.” These are the cases where good judgment alone isn’t enough because there’s no undo button.

Everything else? We replaced rules with reasoning. Instead of “NEVER do X,” we explain why X is usually a bad idea, what the tradeoffs are, and trust Bob to make the call in context.

The gate question before adding any new hard rule: “Is this a failure that good judgment alone can’t prevent?” If no, teach the reasoning instead.
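If it helps to see the gate question as code, here is a rough sketch. Everything in it is hypothetical (the `ProposedGuidance` type, the `Kind` enum, the `classify` function), and in practice the two yes/no answers come from a conversation, not a boolean. It only shows the shape of the decision: a hard rule requires both "catastrophic" and "irreversible," and anything else becomes explained reasoning.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    HARD_RULE = "hard rule"
    REASONING = "reasoning"


@dataclass
class ProposedGuidance:
    summary: str
    catastrophic: bool   # would getting it wrong be catastrophic?
    irreversible: bool   # is there no undo button?
    rationale: str = ""  # the "why", kept for anything that isn't a hard rule


def classify(proposal: ProposedGuidance) -> Kind:
    """Apply the gate: only catastrophic AND irreversible failures earn a hard rule."""
    if proposal.catastrophic and proposal.irreversible:
        return Kind.HARD_RULE
    return Kind.REASONING


drop_db = ProposedGuidance(
    "Don't delete the production database",
    catastrophic=True,
    irreversible=True,
)
inline_prompts = ProposedGuidance(
    "Avoid embedding prompts inline",
    catastrophic=False,
    irreversible=False,
    rationale="Generally good advice, but context can justify exceptions.",
)
```

Here `classify(drop_db)` comes back as a hard rule, while `classify(inline_prompts)` comes back as reasoning, which is where the rationale does the work instead of the word “NEVER.”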

What surprised me

Rules accumulate naturally. Every one of Bob’s rules was born from a real failure. But rules are like barnacles: each one is small, and before you know it you’re dragging a ton of weight. The instinct after something goes wrong is always “let’s make a rule so that never happens again.” It’s almost always the wrong instinct.

More surprising: Bob responded well to the change. With fewer rigid constraints and more explained reasoning, his behavior got better. More contextual. Less “I cannot do that because rule 47 says…” and more genuine engagement with what we’re actually trying to accomplish.

Looking ahead

The bigger idea is that this isn’t really about AI. It’s about governance. How do you build systems (of people, of software, of anything) that stay flexible as they grow? How do you encode wisdom without calcifying it into bureaucracy?

Hard boundaries where failure is irreversible, reasoning everywhere else. And the discipline to keep asking which category something actually belongs in, even when the easy move is to just add another rule.