Building a Second Brain That Argues With Me

TLDR: I consume a ton of content across every format — articles, podcasts, YouTube videos, conversations — and couldn’t remember what I actually thought about any of it. So I built a system that processes everything I consume, finds connections I’d miss on my own, and actively challenges my existing beliefs. It changed how I think, not just how I store information.

The problem

I consume a lot. Articles, newsletters, research papers, YouTube videos, podcasts, conversations with smart people. Hundreds of pieces of content a year, across every format imaginable. And I kept having this experience: someone would mention a topic and I’d think “I read something about that… six months ago… I think I disagreed with part of it?” But I couldn’t remember the specifics. The insight was gone.

I tried the usual things. Bookmarks. Notion databases. Saving articles to read-later apps. The result was always the same: a growing pile of stuff I’d saved but never revisited. A digital junk drawer that made me feel productive without actually being useful.

The real problem wasn’t storage. I could store anything, anywhere, for free. The problem was that none of these tools helped me think. They were filing cabinets. I needed something that could look at everything I’d read and say “hey, this thing you read about AI pricing last week contradicts what you believed about AI commoditization three months ago.” I needed a system that could find the connections I was too scattered to notice, and then push back on my own thinking.

What I actually built

I built a personal knowledge system. At its core, it’s pretty simple: when I come across something interesting — an article, a YouTube video, a podcast episode, a conversation, anything — I send it to my AI partner Bob (more on him in another post). He processes it regardless of format, extracts the key ideas, and files a structured note. That part takes about 30 seconds of my time.

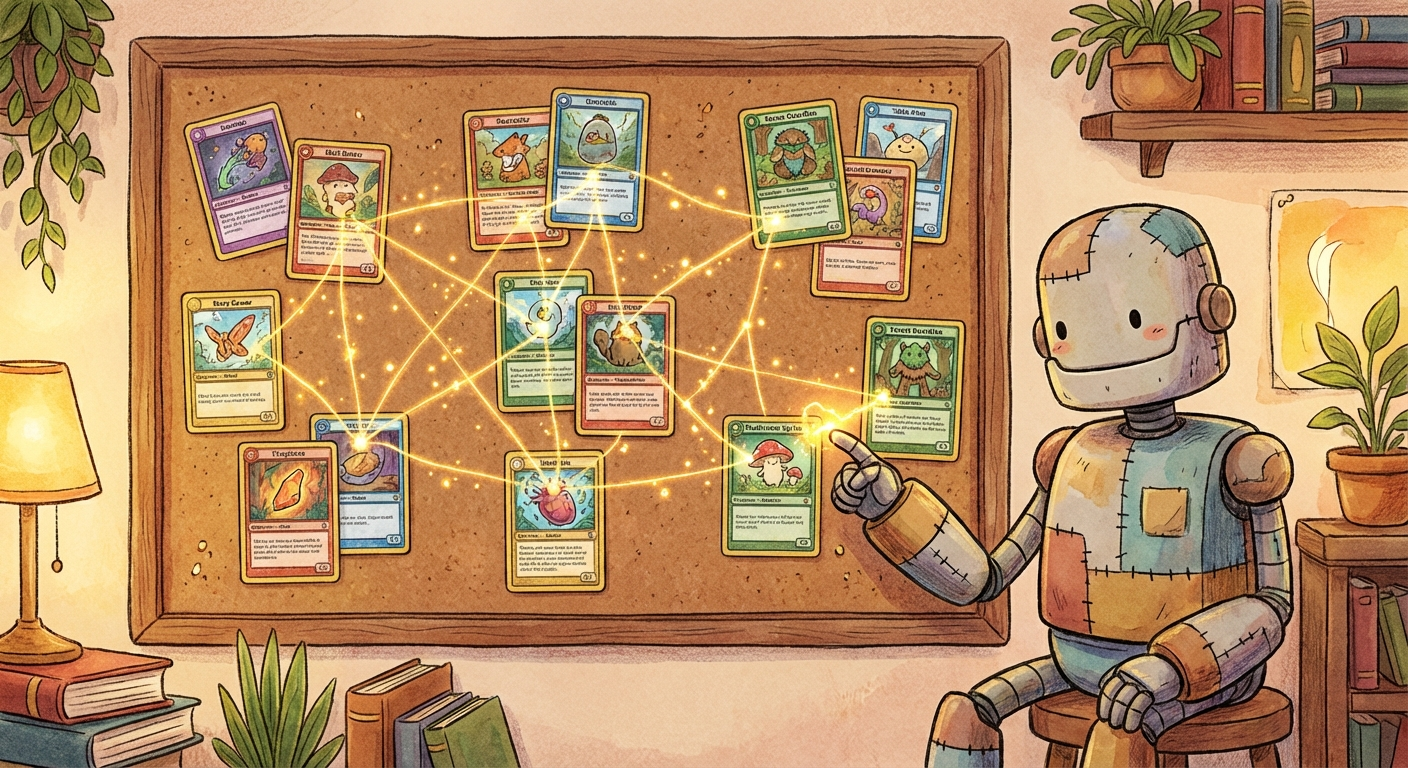

But the filing is just the beginning. Every night, the system runs a synthesis process. It takes all my notes, compares their ideas using the same kind of vector-similarity math that powers search engines, and looks for connections across different topics. An article about AI agent pricing from a tech newsletter might connect to an observation about enterprise software moats from a completely different source. The system finds these overlaps and writes up what it sees.

The part I’m most excited about is what I call living theses. Instead of just collecting notes, the system maintains a set of positions I currently hold about the world. Things like “AI capabilities become free within two years” or “boring, repetitive workflows are where AI creates the most value.” Each thesis tracks the evidence for and against it, with a confidence score.

When I read something new, the system checks: does this support or challenge any of my existing theses? If a new article contradicts something I believe, it gets flagged. If I’ve been holding a position with high confidence but zero counterevidence, the system tells me I might be in a blind spot. It runs a weekly audit and generates research questions to pressure-test the weakest points in my thinking.

In other words, it argues with me. On purpose.
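
For the code-minded, here is a minimal Python sketch of what a living thesis could look like as a data structure. It is an illustration, not the actual implementation: the `Thesis` class, its field names, the 0-to-1 confidence scale, the 0.8 blind-spot threshold, and the note IDs are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Thesis:
    """A position held about the world, with tracked evidence (hypothetical shape)."""
    statement: str
    confidence: float  # assumed scale: 0.0 (no confidence) to 1.0 (certain)
    supporting: list[str] = field(default_factory=list)   # IDs of notes that support it
    challenging: list[str] = field(default_factory=list)  # IDs of notes that contradict it

    def add_evidence(self, note_id: str, supports: bool) -> None:
        """File a note as evidence for or against this thesis."""
        if supports:
            self.supporting.append(note_id)
        else:
            self.challenging.append(note_id)

    def possible_blind_spot(self, threshold: float = 0.8) -> bool:
        """High confidence with zero counterevidence is worth flagging."""
        return self.confidence >= threshold and not self.challenging


thesis = Thesis(
    "Boring, repetitive workflows are where AI creates the most value",
    confidence=0.9,
)
thesis.add_evidence("note-stripe-agents", supports=True)
print(thesis.possible_blind_spot())  # True: confident, but nothing has challenged it yet

thesis.add_evidence("note-counterexample", supports=False)
print(thesis.possible_blind_spot())  # False: at least one note now pushes back
```

Deciding whether a new note supports or challenges a thesis is the hard part, and this sketch leaves it out on purpose. Here that judgment is passed in as the `supports` flag.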

What surprised me

I expected the value to come from better retrieval. Ask a question, get a relevant note, save time. That’s useful, but it turned out to be the least interesting part.

The real value is in the connections. I keep finding links between ideas from completely different domains that I never would have made on my own. An article about how Stripe runs autonomous coding agents connects to a thesis about boring workflows, which in turn connects to an observation about enterprise sales cycles. These cross-domain links are where the interesting thinking happens, and they’re exactly what a human brain is bad at tracking across hundreds of sources.

The other surprise was how much the thesis system changed my reading habits. When you know that everything you read gets evaluated against your existing beliefs, you start reading differently. You notice when you’re just collecting evidence that confirms what you already think. You start seeking out things that might prove you wrong, because that’s where the system (and your thinking) gets stronger.

How it works, roughly

For the curious: the notes are plain markdown files, stored locally, synced to the cloud, and viewable in a notes app. Nothing proprietary. If the system disappeared tomorrow, I’d still have a folder of well-organized notes.

The intelligence layer runs on top. Each note gets converted into a mathematical representation (an embedding) that captures its meaning. The system compares these representations to find notes that are conceptually similar, even if they use completely different words. A note about “enterprise sales cycles shortening when ROI is obvious” might match with a note about “AI agent value in measurable workflows,” because the underlying concepts overlap.
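
To make the comparison step concrete, here is a minimal Python sketch using cosine similarity, a common way to compare embeddings. Whether the system uses exactly this measure is an assumption. The three-number vectors below are hand-made toy values standing in for real embeddings (which typically have hundreds or thousands of dimensions), and the note IDs, the `related_pairs` helper, and the 0.8 threshold are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: near 1.0 means similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as similar to nothing
    return dot / (norm_a * norm_b)


def related_pairs(embeddings: dict[str, list[float]], threshold: float = 0.8):
    """Return (note_a, note_b, score) for every pair at or above the threshold."""
    ids = sorted(embeddings)
    pairs = []
    for i, a in enumerate(ids):
        for b in ids[i + 1:]:
            score = cosine_similarity(embeddings[a], embeddings[b])
            if score >= threshold:
                pairs.append((a, b, score))
    # Strongest connections first
    return sorted(pairs, key=lambda p: p[2], reverse=True)


# Toy vectors, not output from a real embedding model
notes = {
    "enterprise-sales-roi": [0.9, 0.1, 0.3],
    "ai-agent-workflows": [0.8, 0.2, 0.4],
    "sourdough-recipe": [0.1, 0.9, 0.0],
}

for a, b, score in related_pairs(notes):
    print(f"{a} <-> {b}: {score:.2f}")
```

With these toy values, only the two business notes clear the threshold. Comparing every pair is fine for a few hundred notes; a much larger collection would likely want a vector index instead.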

The synthesis process uses a large language model to read pairs of related notes and write up what connects them, where they disagree, and what questions they raise together. The thesis auditor scores each position on confidence, evidence count, staleness, and whether it’s been challenged recently.
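
As a rough illustration of the audit, here is a minimal Python sketch that turns those four signals into a single "needs attention" score. Everything specific in it is an assumption made for the example: the `ThesisStatus` fields, the one-point-per-warning scoring, and the cutoffs (fewer than 3 pieces of evidence, 90 days for staleness, 30 days for "recently challenged", 0.8 for high confidence).

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ThesisStatus:
    """Snapshot of one thesis for auditing (hypothetical shape)."""
    statement: str
    confidence: float               # assumed scale: 0.0 to 1.0
    evidence_count: int             # total notes for or against
    last_updated: date              # when evidence was last added
    last_challenged: Optional[date]  # None if never challenged


def audit_priority(
    t: ThesisStatus,
    today: date,
    min_evidence: int = 3,
    stale_after_days: int = 90,
    challenge_window_days: int = 30,
) -> float:
    """Higher score = weaker spot. One point per warning sign."""
    score = 0.0
    if t.evidence_count < min_evidence:
        score += 1.0  # thin evidence
    if (today - t.last_updated).days > stale_after_days:
        score += 1.0  # stale: nothing new in a while
    recently_challenged = (
        t.last_challenged is not None
        and (today - t.last_challenged).days <= challenge_window_days
    )
    if t.confidence >= 0.8 and not recently_challenged:
        score += 1.0  # confident but untested lately
    return score


today = date(2025, 1, 1)  # fixed date so the example is reproducible
shaky = ThesisStatus("AI capabilities become free within two years",
                     0.9, 2, date(2024, 6, 1), None)
solid = ThesisStatus("Boring, repetitive workflows are where AI creates the most value",
                     0.6, 10, date(2024, 12, 20), date(2024, 12, 15))

print(audit_priority(shaky, today))  # 3.0: thin, stale, and unchallenged
print(audit_priority(solid, today))  # 0.0: nothing to flag
```

Theses with the highest scores would be the natural candidates for the research questions the weekly audit generates.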

Everything runs automatically. I just keep reading and sharing interesting things. The system does the rest.

What’s next

I’m working on closing the loop: when the thesis audit finds a weak spot in my thinking, it should automatically go research the question and bring back what it finds. Right now it generates the questions, but I still have to find the answers manually. Soon it’ll do that too.

I’m also thinking about how to make the theses themselves shareable. Right now they live in my private system, but the idea of publicly maintained positions with tracked evidence and confidence scores feels like it could be interesting for more people. Imagine being able to see someone’s actual track record of predictions and belief updates, not just their latest hot take.

But mostly I’m just enjoying having a system that makes me a better thinker. Consuming hundreds of pieces of content used to mean hundreds of forgotten insights. Now it means a growing web of connected ideas that keeps getting smarter, including about the things I might be wrong about.